The Story of Soundex

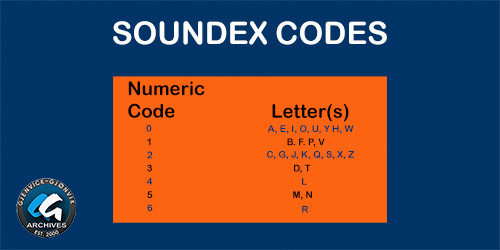

Soundex Codes -- Numeric Code with Associated Letter(s) of the English Alphabet. | GGA Image ID # 1cf8fcace1

Originally developed by Margaret K. Odell and Robert C. Russell at the U.S. Bureau of Archives to simplify census taking in the early 1900s, the Soundex algorithm isn’t perfect, but it works quite well to overcome the problem of alternative spellings of names.

The Soundex Algorithm makes the following assumptions about words in English:

- Vowels contribute less to the sound of a word than consonants do and may therefore be disregarded unless they occur at the beginning of the word.

- The letters H, W, and Y make only minor contributions to the sounds of most words and can therefore also be disregarded, again unless they occur at the beginning of the word.

- Similar sounding consonants that appear together in a word sound a lot like single consonants, and for analytical purposes can be reduced to a single consonant sound.

Soundex reduces any word to a four-character code (an initial letter followed by three digits) that summarizes how the word is pronounced. If the codes for two words match, the words sound roughly the same.

The Soundex system provides for variant spellings or misspellings of a name. Phonetic compression schemes such as Soundex generally cannot compensate for random errors, such as typing an incorrect character, or for transpositions (where the order of two letters is swapped).

Overview

Soundex is a phonetic algorithm for indexing names by sound, as pronounced in English. The goal is for homophones to be encoded to the same representation so that they can be matched despite minor differences in spelling. The algorithm mainly encodes consonants; a vowel will not be encoded unless it is the first letter. Soundex is the most widely known of all phonetic algorithms, as it is a standard feature of MS SQL and Oracle, and is often used (incorrectly) as a synonym for "phonetic algorithm". Improvements to Soundex are the basis for many modern phonetic algorithms.

History

Soundex was developed by Robert C. Russell and Margaret K. Odell and patented in 1918 and 1922. A variation called American Soundex was used in the 1930s for a retrospective analysis of the US censuses from 1890 through 1920. The Soundex code came to prominence in the 1960s when it was the subject of several articles in the Communications and Journal of the Association for Computing Machinery, and especially when described in Donald Knuth's The Art of Computer Programming.

The National Archives and Records Administration (NARA) maintains the current rule set for the official implementation of Soundex used by the U.S. Government. These encoding rules are available from NARA, upon request, in the form of General Information Leaflet 55, "Using the Census Soundex".

Soundex variants

A similar algorithm called "Reverse Soundex" prefixes the last letter of the name instead of the first.

The NYSIIS algorithm was introduced by the New York State Identification and Intelligence System in 1970 as an improvement to the Soundex algorithm. NYSIIS handles some multi-character n-grams and maintains relative vowel positioning, whereas Soundex does not.

Daitch–Mokotoff Soundex (D–M Soundex) was developed in 1985 by genealogist Gary Mokotoff and later improved by genealogist Randy Daitch because of problems they encountered while trying to apply the Russell Soundex to Jews with Germanic or Slavic surnames (such as Moskowitz vs. Moskovitz or Levine vs. Lewin).

D–M Soundex is sometimes referred to as "Jewish Soundex" or "Eastern European Soundex", although the authors discourage the use of these nicknames. The D–M Soundex algorithm can return as many as 32 individual phonetic encodings for a single name. Results of D–M Soundex are returned in an all-numeric format between 100000 and 999999. This algorithm is much more complex than Russell Soundex.

As a response to deficiencies in the Soundex algorithm, Lawrence Philips developed the Metaphone algorithm in 1990 for the same purpose. Philips developed an improvement to Metaphone in 2000, which he called Double Metaphone. Double Metaphone includes a much larger encoding rule set than its predecessor, handles a subset of non-Latin characters, and returns a primary and a secondary encoding to account for different pronunciations of a single word in English.

Useful Guide to the Soundex System

The Soundex filing system, alphabetic for the first letter of surname and numeric thereunder as indicated by divider cards, keeps together names of the same and similar sounds but of variant spellings.

To search for a particular name, you must first work out the code number for the individual's surname. No number is assigned to the first letter of the surname. If the name is Kuhne, for example, the index card will be in the "K" segment of the index. The code number for Kuhne, worked out according to the system below, is 500.

Soundex Coding Guide

| Code Key | Letters and Equivalents |
|---|---|
| 1 | b, p, f, v |
| 2 | c, s, k, g, j, q, x, z |
| 3 | d, t |
| 4 | l |
| 5 | m, n |
| 6 | r |

The letters a, e, i, o, u, y, w, and h are not coded. The first letter of the surname is not coded.

Every Soundex number must be a 3-digit number. A name yielding no code numbers, as Lee, would thus be L-000; one yielding only one code number would have two zeros added, as Kuhne, coded as K-500; and one yielding two code numbers would have one zero added, as Ebell, coded as E-140. Not more than three digits are used, so Ebelson would be coded as E-142, not E-1425.

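When two key letters or equivalents appear together, or one key letter immediately follows or precedes an equivalent, the two are coded as one letter, by a single number, as follows: Kelly, coded as K-400; Buerck, coded as B-620; Lloyd, coded as L-300; and Schaefer, coded as S-160. As the last two examples show, this also applies to a letter that immediately follows the first letter of the surname and shares its code key.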
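The coding rules above can be sketched in a few lines of Python. This is a minimal illustration that follows the guide as written, not an official implementation: the function name `soundex` and the `CODES` table are introduced here for the example, and the sketch assumes that only immediately adjacent letters with the same code key are merged and that any non-letter character (such as the apostrophe in O'Brien) is simply dropped.

```python
# Code key table from the coding guide: digit -> letters and equivalents.
CODES = {}
for digit, letters in {
    "1": "bpfv",
    "2": "cskgjqxz",
    "3": "dt",
    "4": "l",
    "5": "mn",
    "6": "r",
}.items():
    for ch in letters:
        CODES[ch] = digit


def soundex(surname):
    """Return a code such as 'K-500' for a surname, following the guide above."""
    # Keep ASCII letters only (assumption: apostrophes, spaces, etc. are ignored).
    letters = [c for c in surname.lower() if c.isascii() and c.isalpha()]
    if not letters:
        return ""

    first = letters[0]
    digits = []
    # The first letter is not coded, but it still suppresses an adjacent
    # letter with the same code key (Lloyd -> L-300, Schaefer -> S-160).
    previous = CODES.get(first, "")
    for ch in letters[1:]:
        code = CODES.get(ch, "")  # a, e, i, o, u, y, w, h have no code
        if code and code != previous:
            digits.append(code)
        previous = code

    # Exactly three digits: truncate extras, pad with zeros.
    return first.upper() + "-" + "".join(digits)[:3].ljust(3, "0")


print(soundex("Kuhne"))    # K-500
print(soundex("Ebelson"))  # E-142
print(soundex("Lloyd"))    # L-300
```

Some published rule sets treat H and W differently when they fall between two letters with the same code key; that refinement is not described in this guide and is not implemented in the sketch.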

If several surnames have the same code, the cards for them are arranged alphabetically by given name. There are divider cards showing most code numbers, but not all. For instance, one divider may be numbered 350 and the next one 400. Between the two divider cards there may be names coded 353, 350, 360, 365, and 355, but instead of being in numerical order they are interfiled alphabetically by given name.
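The filing order described above can be modeled roughly as follows. This is only a sketch of the arrangement within one initial letter: the function name `file_cards`, the card representation as `(code number, given name)` pairs, and the given names in the example are all hypothetical and chosen for illustration.

```python
from bisect import bisect_right


def file_cards(cards, dividers):
    """Place each (code_number, given_name) card behind the nearest divider
    at or below its code number, then order each group by given name."""
    dividers = sorted(dividers)
    groups = {d: [] for d in dividers}
    for code_number, given_name in cards:
        idx = bisect_right(dividers, code_number) - 1
        if idx < 0:
            raise ValueError(f"no divider at or below {code_number}")
        groups[dividers[idx]].append((code_number, given_name))
    for group in groups.values():
        # Within a divider, cards are interfiled by given name, not by number.
        group.sort(key=lambda card: card[1].lower())
    return groups


# Hypothetical given names attached to the code numbers from the example above.
cards = [(353, "Walter"), (350, "Anna"), (360, "Maria"),
         (365, "Carl"), (355, "John"), (400, "Bertha")]
for divider, group in file_cards(cards, [350, 400]).items():
    print(divider, group)
```

In practice this means a search cannot stop at the first card with a different code number; every card between two dividers may need to be checked.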

Such prefixes to surnames as "van," "von," "di," "de," "le," "D'," "dela," or "du" are sometimes disregarded in alphabetizing and in coding.
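Because a prefix is only sometimes disregarded, a search may need to try the surname both with and without it. The sketch below lists both spellings to look up. The helper name `surname_candidates` is hypothetical, and the sketch assumes a prefix is written as a separate word (or ends in an apostrophe, as in "D'"); it makes no attempt to detect prefixes fused into the surname.

```python
# Prefixes listed in the guide, lowercased for comparison.
PREFIXES = ("van", "von", "di", "de", "le", "d'", "dela", "du")


def surname_candidates(surname):
    """Return the surname as written, plus the surname without a leading
    prefix when one of the listed prefixes is present."""
    candidates = [surname]
    lowered = surname.lower()
    # Try longer prefixes first so "dela" is preferred over "de".
    for prefix in sorted(PREFIXES, key=len, reverse=True):
        # Assumption: word prefixes are followed by a space; "d'" is not.
        head = prefix if prefix.endswith("'") else prefix + " "
        if lowered.startswith(head):
            rest = surname[len(head):].strip()
            if rest:
                candidates.append(rest)
            break
    return candidates


print(surname_candidates("Van Lind"))  # ['Van Lind', 'Lind']
print(surname_candidates("Lee"))       # ['Lee']
```

Each candidate can then be coded separately and both codes searched.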

The following names are examples of Soundex coding and are given only as illustrations.

| Name | Letters Coded | Soundex Code |
|---|---|---|
| Allricht | l, r, c | A-462 |
| Eberhard | b, r, r | E-166 |
| Engebrethson | n, g, b | E-521 |
| Heimbach | m, b, c | H-512 |
| Hanselmann | n, s, l | H-524 |
| Henzelmann | n, z, l | H-524 |
| Hildebrand | l, d, b | H-431 |
| Kavanagh | v, n, g | K-152 |
| Lind, Van | n, d | L-530 |
| Lukaschowsky | k, s, s | L-222 |
| McDonnell | c, d, n | M-235 |
| McGee | c | M-200 |
| O'Brien | b, r, n | O-165 |
| Opnian | p, n, n | O-155 |
| Oppenheimer | p, n, m | O-155 |
| Riedemanas | d, m, n | R-355 |
| Zita | t | Z-300 |
| Zitzmeinn | t, z, m | Z-325 |

Native Americans, Orientals, and Religious Nuns

Researchers using the Soundex system to locate religious nuns or persons with American Indian or oriental names should be aware of the way such names were coded. Variations in coding differed from the normal coding system.

Phonetically spelled oriental and Indian names were sometimes coded as if one continuous name, or, if a distinguishable surname was given, the names were coded in the normal manner. For example, the American Indian name Shinka-Wa-Sa may have been coded as "Shinka" (S-520) or "Sa" (S-000). Researchers should investigate the various possibilities of coding such names.

Religious nun names were coded as if "Sister" were the surname, and they appear in the Soundex indexes under the code "S-236." Within the code S-236, the names may not be in alphabetical order.